Using the AWS CLI to Collect Amazon S3 Bucket Object Information

Data collection is the theme of the month! The latest ask was an easy way to identify S3 bucket objects by name, storage class, and time created.

You may be asking, why is this important? When objects land in S3, they can be stored in many nested folders, and there are many different S3 storage classes. What happens if you want to search all the objects and identify which object is in which storage class? This script will help collect that data.

In my case here at Everpure, we allow customers to offload snapshots to S3 as part of our CloudSnap feature.

Today, three classes are supported:

- Standard - Items are stored in the S3 Standard Tier

- Retention-Based - Objects are stored in Standard and then moved to Standard-IA after 30 days through a lifecycle policy.

- Direct to Standard-IA - When using Retention-Based with retention set to 30 days or more, objects are placed immediately in the Standard-IA class.

Prerequisites

- Install the AWS CLI

- Use aws configure to set the credentials and default Region for your AWS account.
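Before running anything against a bucket, it can help to confirm that the CLI is installed and which account and Region it will use. The following is a minimal sketch; the function name check_aws_setup is hypothetical and not part of the script described in this post.

```shell
#!/bin/sh
# Hypothetical helper (not from the original script): verify the AWS CLI
# is available and show the account ID and default Region it will use.
check_aws_setup() {
  # Fail early if the aws command cannot be found.
  if ! command -v aws >/dev/null 2>&1; then
    echo "aws CLI not found on PATH" >&2
    return 1
  fi

  # Print the account ID of the configured credentials.
  aws sts get-caller-identity --query 'Account' --output text || return 1

  # Print the default Region stored by aws configure.
  aws configure get region
}

# Usage: check_aws_setup
```

If the first call fails, the credentials set through aws configure are likely missing or expired.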

Using the Code

My initial use case for this script was to gather all of the S3 objects within a bucket and then sort them by storage class.

To execute the code, run:

./get-s3-bucket-objects.sh <bucketname>

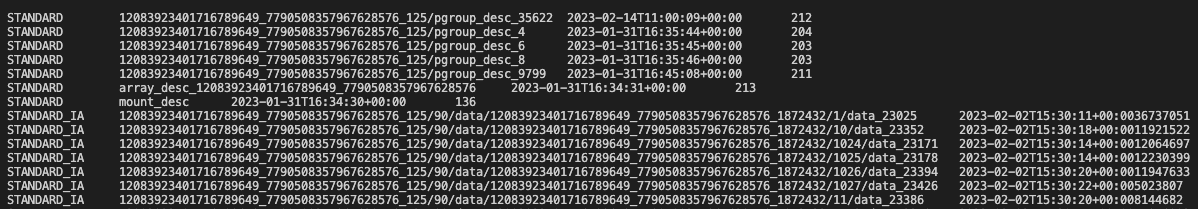

Here is an example of the output. There is no header row, but you can tell what each column is from the query fields in the AWS CLI command.
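As a rough sketch of how a listing like this could be produced (not the original script), the aws s3api list-objects-v2 command can return selected fields per object. The query fields Key, StorageClass, and LastModified are assumed here to match the name, storage class, and time columns, and the function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical sketch, not the original get-s3-bucket-objects.sh.

# Print one tab-separated row per object: Key, StorageClass, LastModified.
# $1 is the bucket name. An empty bucket prints "None".
list_bucket_objects() {
  aws s3api list-objects-v2 \
    --bucket "$1" \
    --query 'Contents[].[Key, StorageClass, LastModified]' \
    --output text
}

# Sort rows read from stdin by storage class (column 2), then by key.
sort_by_storage_class() {
  LC_ALL=C sort -t "$(printf '\t')" -k2,2 -k1,1
}

# Summarize rows read from stdin as "storage class <TAB> object count".
count_by_storage_class() {
  awk -F '\t' '{ n[$2]++ } END { for (c in n) print c "\t" n[c] }'
}

# Usage:
#   list_bucket_objects my-bucket | sort_by_storage_class
#   list_bucket_objects my-bucket | count_by_storage_class
```

Keeping the listing separate from the sorting and counting means the same output can be piped into either helper, or redirected to a file for later use.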

Conclusion

Another great way of using APIs and CLIs to collect information. Collecting all this data manually would have been a pain, but the script should help you gather this information and present it easily!
