Another lovely Friday starting off with strange issues. When taking a host out of maintenance mode, we noticed that HA would not reconfigure and kept reporting: The object ‘vim.Datastore:datastore-1131’ has already been deleted or has not been completely created. This was strange because no other hosts had this issue.

I proceeded to follow standard troubleshooting steps: reconfigure HA… same issue… disable HA for the entire cluster and …


So today I successfully passed the VMware Certified Advanced Professional 6.5 – Data Center Virtualization Design Exam 3V0-624!

I had set a personal goal at the beginning of the year to pass the VCAP6-DCV Design exam. Unfortunately, after months of studying, I took it and failed in March. I was ready to give up given the cost of the exam and the time I had spent studying. However, I recently found out that a new version based on 6.5 was released in August and that the Visio-like design sections (which were the biggest pain) had been dropped, so I worked to acquire a voucher from work and decided …

So it seems to be that time of year again, with new projects coming around. This time it's all about vRA. This is probably the first of many posts about things I have come across.

My question today was: how do I pre-create a computer account in AD, join the machine to the domain, and then clean up the account when the machine is destroyed?

Turns out this is super simple in vRealize Automation 7.3!

vSphere 6.5U1 was released on July 27th. This is the kind of incremental update a lot of people wait for before upgrading from a previous version. I have been lucky to have been running vSphere 6.5 for quite some time and have been enjoying it very much.

Obviously, we never upgrade production first if we can help it. I decided to attempt an upgrade on one of our QA vCenters that was deployed using the VCHA Advanced workflow. I followed my standard upgrade steps (Updating a VCHA 6.5 vCenter); however, when I went to fail over the Active node to the upgraded Passive/Witness nodes, services would not start, …

I have been working on a vCenter consolidation project. This included migrating our systems to a new vRealize Operations server. This particular one involved 73 different vSOM keys and, as you may know, there is currently no way to enter multiple keys at a time. I reached out to Kyle Ruddy, who told me there is an API for this, and that started my adventure to get it working.
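The post stops before the API call itself, so here is only a minimal Python sketch of the preparation step: reading the keys, validating and de-duplicating them, and wrapping them in a single JSON body. The payload shape (`solutionLicenses` / `licenseKey`) and the helper names are hypothetical; check the vRealize Operations API documentation for the real endpoint and request body before sending anything.

```python
"""Sketch: prepare a batch of license keys for one bulk-add API call.

Assumptions (hypothetical, not taken from the vROps documentation):
  * keys arrive one per line (blank lines and '#' comments are ignored);
  * the target API accepts a JSON body shaped like
    {"solutionLicenses": [{"licenseKey": "..."}]}.
"""
import json
import re
from typing import Iterable, List

# VMware license keys are five groups of five alphanumeric characters.
KEY_PATTERN = re.compile(r"^[A-Z0-9]{5}(-[A-Z0-9]{5}){4}$")


def normalize_keys(lines: Iterable[str]) -> List[str]:
    """Strip, upper-case, validate and de-duplicate keys, keeping order."""
    seen = set()
    keys = []
    for raw in lines:
        key = raw.strip().upper()
        if not key or key.startswith("#"):
            continue  # skip blank lines and comments
        if not KEY_PATTERN.match(key):
            raise ValueError(f"not a license key: {raw.strip()!r}")
        if key not in seen:
            seen.add(key)
            keys.append(key)
    return keys


def build_payload(keys: List[str]) -> str:
    """Build the (hypothetical) JSON body for a single bulk request."""
    return json.dumps({"solutionLicenses": [{"licenseKey": k} for k in keys]})


if __name__ == "__main__":
    sample = [
        "AAAAA-BBBBB-CCCCC-DDDDD-EEEEE",
        "aaaaa-bbbbb-ccccc-ddddd-eeeee",  # duplicate once upper-cased
        "",
        "11111-22222-33333-44444-55555",
    ]
    keys = normalize_keys(sample)
    print(len(keys))
    print(build_payload(keys))
```

Validating locally means a single mistyped key fails fast, before any request reaches the server.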


I have been working on a vCenter consolidation project, which has meant recreating multiple permission groups. I couldn't find an easy way to apply permissions at the datacenter level, so I updated this script to do it.

Prerequisites

Link to Script

Preparing to Execute the Script

The script is pretty straightforward; you just need to update the columns in the CSV, such as Datacenter, Group, and Role.
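Before running the script it can help to sanity-check the CSV. This is a minimal Python sketch, assuming a header row that contains at least the Datacenter, Group and Role columns named above; it is not the linked script, it does not talk to vCenter, and the group and role values in the usage example are made up.

```python
"""Sketch: load and validate a permissions CSV before applying it.

Assumption: the first row is a header containing at least the
Datacenter, Group and Role columns; any other columns are ignored.
"""
import csv
import io
from typing import Dict, List

REQUIRED = ("Datacenter", "Group", "Role")


def load_permissions(text: str) -> List[Dict[str, str]]:
    """Return one dict per data row, raising if a required value is missing."""
    reader = csv.DictReader(io.StringIO(text))
    missing = [c for c in REQUIRED if c not in (reader.fieldnames or [])]
    if missing:
        raise ValueError(f"CSV is missing columns: {', '.join(missing)}")
    rows = []
    for row_no, row in enumerate(reader, start=1):  # counts data rows only
        # A short row yields None for absent fields, so coerce to "".
        entry = {c: (row[c] or "").strip() for c in REQUIRED}
        if not all(entry.values()):
            raise ValueError(f"data row {row_no} has an empty required field")
        rows.append(entry)
    return rows


if __name__ == "__main__":
    sample = "Datacenter,Group,Role\nDC01,vsphere-admins,Admin\nDC02,vsphere-readers,ReadOnly\n"
    for entry in load_permissions(sample):
        print(entry["Datacenter"], entry["Group"], entry["Role"])
```

Catching an empty Role or a misspelled header here is cheaper than discovering it halfway through applying permissions.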


Warning:

A few others and I have noticed that updating a VCHA cluster to 6.5U1 resets the hostname to localhost.localdomain, which stops the vCenter Web Client from loading.

It seems to be isolated to Advanced deployments, not Basic. In this scenario, I would recommend destroying your VCHA cluster, updating, and then redeploying.

Warning 2:

It also seems that redeploying VCHA Advanced after an upgrade still resets the hostname. I have a case open and will update here as necessary.
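To spot the symptom quickly after an update, a small check like the following may help. It is a minimal Python sketch, assuming Python 3.6 or later is available where you run it (on the node itself, or against a name copied from `hostname -f`); treating a bare `localhost` as a reset is my own assumption, not something observed in the issue above.

```python
"""Sketch: detect the localhost.localdomain hostname reset on a VCHA node.

Assumption: Python 3.6+ is available where this runs. Bare 'localhost'
is also flagged as suspicious (an assumption, not part of the reported bug).
"""
import socket

RESET_VALUES = {"localhost", "localhost.localdomain"}


def hostname_was_reset(fqdn: str) -> bool:
    """True if the name matches the reset value (case and trailing dot ignored)."""
    return fqdn.strip().lower().rstrip(".") in RESET_VALUES


if __name__ == "__main__":
    current = socket.getfqdn()
    if hostname_was_reset(current):
        print(f"WARNING: hostname is {current!r}; the Web Client may not load")
    else:
        print(f"hostname looks fine: {current}")
```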

The first vSphere 6.5 patch (6.5a) was just released! So far it seems there are a few …

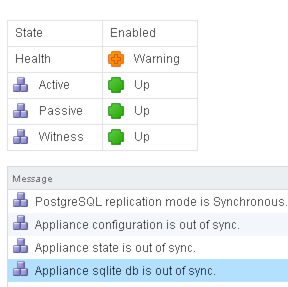

One of my favorite new features of vSphere 6.5 is definitely vCenter HA. I was checking alerts when I noticed I had an alarm for vCenter HA being degraded.

Looking through the GUI, I was unable to find anything explaining why the health status was a warning or why the components would not sync. Then I remembered a previous case I had opened for VCHA.

There is a log file located in /var/log/vmware/vcha
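When the GUI gives no reason, skimming that directory for problem lines is a quick first pass. Below is a minimal Python sketch, assuming the logs are plain text under /var/log/vmware/vcha; the keywords are hypothetical filter terms, and compressed rotated logs are not decompressed.

```python
"""Sketch: pull warning/error lines out of the VCHA logs.

Assumptions: logs are plain text under /var/log/vmware/vcha (the directory
named in the post); KEYWORDS is a hypothetical filter, adjust it to what
you actually see. Compressed (.gz) rotated logs are NOT decompressed.
"""
import os
import sys
from typing import Iterable, Iterator, Sequence, Tuple

LOG_DIR = "/var/log/vmware/vcha"
KEYWORDS = ("error", "warn", "fail")  # hypothetical filter terms


def matching_lines(lines: Iterable[str],
                   keywords: Sequence[str] = KEYWORDS) -> Iterator[Tuple[int, str]]:
    """Yield (line number, text) for lines containing any keyword, case-insensitively."""
    for number, line in enumerate(lines, start=1):
        lowered = line.lower()
        if any(k in lowered for k in keywords):
            yield number, line.rstrip("\n")


def scan_dir(path: str = LOG_DIR) -> None:
    """Print file:line: text for every matching line under path."""
    for root, _dirs, files in os.walk(path):  # yields nothing if path is missing
        for name in sorted(files):
            full = os.path.join(root, name)
            try:
                with open(full, errors="replace") as handle:
                    for number, text in matching_lines(handle):
                        print(f"{full}:{number}: {text}")
            except OSError as exc:
                print(f"skipping {full}: {exc}", file=sys.stderr)


if __name__ == "__main__":
    scan_dir(sys.argv[1] if len(sys.argv) > 1 else LOG_DIR)
```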

I had a use case I wanted to investigate regarding the new VCHA feature in VMware vCenter Server 6.5. One thing I noticed is that when you have VCHA configured, there is no way to know which node of the HA cluster you are connecting to without logging on.

As others have noted before, William Lam covered this in his post How to Customize Webclient Login UI.

I figured that since I am using the embedded SSO, I could update the login page to show any information for that particular node. In my case, I updated the text to show which node you are connected to, so you can see it without even logging in!

So VMware Flings have been on a roll recently. They have released what they call “VMware Content Library Assistant.” This is a Java-based CLI app that connects to your vCenter, searches for your templates, and then automatically creates and uploads them.

You can find the fling at the following URL: https://labs.vmware.com/flings/vsphere-content-library-assistant

Once you download the fling, you can just run the following command.

java -jar sphere-content-library-assistant-1.0.jar -s servername -u username -p password

In my case, my Java home isn’t set up on my Mac, so …
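If you run the assistant often, a small wrapper saves retyping the command. This is a minimal Python sketch that uses only the `-s`, `-u` and `-p` flags shown in the post. The environment variable names (`CLA_SERVER`, `CLA_USER`, `CLA_PASSWORD`) and the JAVA_HOME fallback are hypothetical conveniences, and the password still ends up on the java command line, so it may be visible in the process list while the tool runs.

```python
"""Sketch: launch the Content Library Assistant from environment variables.

Uses only the -s/-u/-p flags shown in the post. CLA_SERVER, CLA_USER and
CLA_PASSWORD are hypothetical variable names. The password is still passed
on the java command line and may be visible in the process list.
"""
import os
import shutil
import subprocess
from typing import List, Mapping

# Jar name as given in the post; adjust it to the file you downloaded.
JAR = "sphere-content-library-assistant-1.0.jar"


def java_binary(env: Mapping[str, str] = os.environ) -> str:
    """Prefer $JAVA_HOME/bin/java, falling back to 'java' on PATH."""
    home = env.get("JAVA_HOME")
    if home:
        return os.path.join(home, "bin", "java")
    found = shutil.which("java")
    if not found:
        raise RuntimeError("java not found; set JAVA_HOME or add java to PATH")
    return found


def build_command(java: str, server: str, user: str, password: str,
                  jar: str = JAR) -> List[str]:
    """Assemble the argument list; no shell is involved, so no quoting issues."""
    return [java, "-jar", jar, "-s", server, "-u", user, "-p", password]


def main() -> None:
    names = ("CLA_SERVER", "CLA_USER", "CLA_PASSWORD")
    missing = [n for n in names if not os.environ.get(n)]
    if missing:
        print(f"set {', '.join(missing)} before running")
        return
    cmd = build_command(java_binary(), *(os.environ[n] for n in names))
    subprocess.run(cmd, check=True)


if __name__ == "__main__":
    main()
```

Passing a list to `subprocess.run` avoids shell quoting problems with passwords that contain special characters.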
